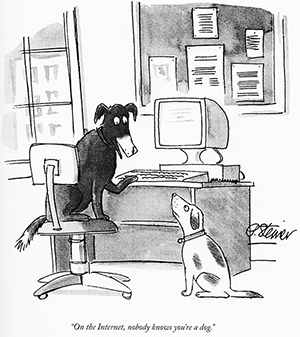

People like me who work in the internet identity space are trying to solve the so-called “Internet Dog Problem.”

You surely must have seen this picture (InternetDog.jpg): On the internet, nobody knows you’re a dog.

This is a hard problem, and we have long been trying to solve it.

At the same time, to promote identity federation and the API economy, privacy problems also had to be solved, so the identirati have long been working on them. Pseudonymous identifiers and partially unlinkable authentication are prime examples. But above all, the most important question is how to get meaningful consent, and we have been trying to answer it. The consent screens you are so familiar with these days were created along the way.

A few years ago, however, I started to doubt whether that is a good model. Do people read the consent screen? Do they read the privacy policy and terms of service? Of course not. Then how could such a consent screen be meaningful? Are we not just training users to click “accept”?

And here comes the “explicit consent requirement” that the EU promotes. Oh, no. That’s a disaster.

We are now turning the Internet dog into Pavlov’s Dog.

We are conditioning users, Pavlov-style, to click “accept”. Attackers can then easily hide a privacy attack in the forest of seemingly benign privacy policy clauses and have the user click “accept”.

Actually, I think the current consent model is completely broken.

The direction of the consent is the reverse of what it should be.

It should be the service providers who agree to the individual’s privacy policy, because it is we who are letting them use our personal data.

One way of implementing this is to create a small set of consent licenses, like the Creative Commons licenses. A typical one would be something like:

Minimal – No Share – Single Transaction (min-no-1 license)

standing for

- Minimal – minimal data (only the minimum amount of data needed to fulfill the transaction is requested);

- No Share – do not pass the data to anyone else, except when outsourcing to a data processor, in which case the data controller retains full responsibility for it;

- Single Transaction – the license to the personal data is valid only for this transaction; once the transaction is complete and any required retention period has passed, the data will be deleted.

It can be graphical like

The user can set this preference at the identity provider. If the relying party / service provider agrees to it, the consent screen MUST NOT be shown. This removes friction for the service provider and prevents the user from being trained like Pavlov’s Dog.
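To make the rule concrete, here is a minimal sketch in Python of how an identity provider might decide whether to show the consent screen. The class name `ConsentLicense`, its field names, and the function `must_show_consent_screen` are hypothetical and only serve the illustration. The only thing taken from the text above is the `min-no-1` license itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentLicense:
    """One entry in a small, fixed set of consent licenses (hypothetical model)."""
    data_scope: str  # "min" = only the minimum data needed for the transaction
    sharing: str     # "no"  = no sharing, except outsourcing to a data processor
    duration: str    # "1"   = valid for a single transaction only

    @property
    def code(self) -> str:
        # Short code in the style of the "min-no-1" name used in the text.
        return f"{self.data_scope}-{self.sharing}-{self.duration}"


MIN_NO_1 = ConsentLicense("min", "no", "1")


def must_show_consent_screen(user_preference, rp_agreed_license):
    """Return True only when the RP has not agreed to the user's preferred license."""
    return rp_agreed_license != user_preference


print(MIN_NO_1.code)                                  # min-no-1
print(must_show_consent_screen(MIN_NO_1, MIN_NO_1))   # False
print(must_show_consent_screen(MIN_NO_1, None))       # True
```

The point of the design is that the silent path is the normal one: the screen appears only when the relying party asks for something outside the license the user has already chosen.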

This way, if a consent screen does appear, the user will know that something unusual is happening and that they should be very careful about it.

Now the problem becomes whether the service provider is telling the truth. For example, under the min-no-1 license, is the service provider really asking only for the minimum data?

This is where the assessor comes in. An accredited assessor of a Privacy Trust Framework examines the request and determines whether it complies with the min-no-1 license. If it does, the assessor signs the request file. The service provider can then register the location of the file with the IdP. The IdP fetches the file, validates the signature, and decides whether the request is good for its users. If so, it lets the request through.
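The sign-then-verify flow can be sketched roughly as follows. This is an illustration under loud assumptions, not a real trust framework implementation: an HMAC with a shared demo key stands in for the assessor's signature so that the example needs only the Python standard library, whereas a real deployment would presumably use an asymmetric signature that the IdP verifies with the assessor's public key. The request file layout, the key, and all function names are hypothetical.

```python
import hashlib
import hmac
import json

# ASSUMPTION: a shared demo key stands in for the assessor's real signing key.
ASSESSOR_KEY = b"demo-assessor-key"


def canonical_bytes(request: dict) -> bytes:
    # Serialize deterministically so signer and verifier hash the same bytes.
    return json.dumps(request, sort_keys=True, separators=(",", ":")).encode("utf-8")


def assessor_sign(request: dict) -> str:
    """Assessor side: sign a request file that was found to comply with the license."""
    return hmac.new(ASSESSOR_KEY, canonical_bytes(request), hashlib.sha256).hexdigest()


def idp_accepts(request: dict, signature: str, user_license: str) -> bool:
    """IdP side: validate the signature, then check the license the request claims."""
    expected = assessor_sign(request)
    if not hmac.compare_digest(expected, signature):
        return False  # file was altered after assessment, or never assessed
    return request.get("license") == user_license


# Hypothetical request file contents.
request_file = {"client_id": "example-rp", "license": "min-no-1", "claims": ["email"]}
signature = assessor_sign(request_file)

print(idp_accepts(request_file, signature, "min-no-1"))  # True

# A request that asks for more than what was assessed no longer verifies.
tampered = dict(request_file, claims=["email", "phone_number"])
print(idp_accepts(tampered, signature, "min-no-1"))      # False
```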

Is there a protocol that achieves it?

Yes. OpenID Connect. OpenID Connect has a facility for registering something called request_uri, which does exactly what is described above. So the technology is there. What we do not have is the policy template and the trust framework that assesses service provider requests.
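As a rough sketch of what this looks like on the wire, the Python snippet below builds an authorization request that passes the request by reference through the `request_uri` parameter. The endpoint, client ID, and file URL are made-up placeholders. Appending the base64url-encoded SHA-256 hash of the file contents as the URI fragment follows my reading of the OpenID Connect registration guidance for request files whose contents could change, so treat that part as an assumption to check against the specification.

```python
import base64
import hashlib
from urllib.parse import urlencode


def request_uri_with_hash(file_url: str, file_contents: bytes) -> str:
    """Append the base64url SHA-256 hash of the request file as the URI fragment."""
    digest = hashlib.sha256(file_contents).digest()
    fragment = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return f"{file_url}#{fragment}"


def build_authorization_url(authorization_endpoint: str, client_id: str, request_uri: str) -> str:
    """Build an authorization request that references a pre-registered request file."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "scope": "openid",
        "request_uri": request_uri,
    }
    return f"{authorization_endpoint}?{urlencode(params)}"


# Placeholder values for illustration only.
signed_request_file = b"<contents of the assessor-signed request file>"
uri = request_uri_with_hash("https://rp.example.com/requests/min-no-1.jwt", signed_request_file)
url = build_authorization_url("https://idp.example.com/authorize", "example-rp", uri)
print(url)
```

Because the IdP only accepts request_uri values that the service provider registered beforehand, it can fetch and vet the signed file once, before any user ever sees a request based on it.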

I agree that click training is a problem. One cause may be that “yes” is the only choice for anyone who wants to use the service. Therefore, it is a good idea to offer a small set of consent choices instead of just “yes”. In that case, the set might need to be standardized, ideally as an international standard. This may be a good place for us to use SC27 of ISO/IEC JTC1 😉

I totally agree. Even if the service provider chooses to ask for extra things so that the consent screen appears, users should be given the option of a minimal license like min-no-1. The level of service may be lower for that user, but that is ok.

By the way, I feel that when the service provider asks for “extra”, the extra is almost always the main purpose of the transaction. So, instead of saying “We offer this game. By the way, can you give me your contact address as well?”, it has to say “We are building our marketing contact list. If you give me your contact address, we will give you the right to play this game in return.”

Part of the problem with “click training” is structural: the fact that the other parties to the transaction have already worked everything out by the time the individual gets there. In other words, the “click” is an acceptance of terms, rather than letting the individual be the initial offerer of terms, so the terms are never going to be optimized for that individual. I did some analysis of what would have to change in order to remove this structural issue in this W3C position paper a while back (spoiler alert…UMA is involved): http://www.w3.org/2010/policy-ws/papers/18-Maler-Paypal.pdf

Right. That’s one of the reasons we stated in the Personal Data WG at METI that the direction of consent is currently reversed. It has to be the services agreeing to the terms of use of the personal data.

Click training is a huge problem, but I also have concerns over too much auto-sharing without an architecture for people to correct their mistakes. We need an undo capability, a “whoops” button. Because sometimes you’ll wander into a website that you really don’t want to share data with.

This requires a much more complicated commitment on behalf of the data recipient, but otherwise we risk endless phishing / spamming / goatse technique for getting user information by luring them to sites they really don’t want a relationship with…

Right. Consent revocation is a “given” in my mind. At the same time, if the license is “Single Transaction”, the consent is gone once the transaction is over. It does not stay.

Also, note that I am actually suggesting that the request be vetted by a trusted third party. So the request has to be sensible to start with, and if the relying party starts breaking its promise, it will be kicked out of the trust framework and will no longer be able to make requests.

Nice one, Nat. Glad to hear that the technology is falling into place for this. That small set of agreements, as per Creative Commons, was the starting point for the work on Standard Label, so that is also coming together.