The COVID-19 pandemic forced many of us to migrate to the cyber-continent at unprecedented speed. It was so sudden that we were not well prepared for it. Up until then, a lot of social constructs were implicitly based on the limitations of our physical existence. It was not possible to instantly appear in a meeting room from a distance in the physical world. You can, in the cyber world, but we lack adequate protection and capability to achieve what we can do in the real world.

One of the concepts that I believe is useful to contemplate around these issues is “Digital Being”.

Digital Being is our representation in cyberspace. We, as physical beings, cannot get into the wires and act directly. Instead, we have to establish a digital version of ourselves and have it act according to our direction. Any communication is done through this digital being in cyberspace.

To live in cyberspace, we need to establish our Digital Beings.

It is clear, then, that we must be guaranteed the ability to create and control Digital Beings. I would say:

Right to have Digital Being.

It is a mirror concept to the Right to Life in the real world. If you cannot have a Digital Being, you cannot live in the digital world. So, everyone should be able to establish and re-establish their trusted digital being. For the communication to be trusted and secure, the entities need to be able to identify each other by their “digital names” and open secure communication channels between them. That means:

- Digital beings for a person need to be linked and controlled by the physical person.

- Digital Beings must be capable of being authenticated by the party they are communicating with.

- They need to be able to send and receive signed messages among themselves.

- If a dispute occurs, the person controlling the digital being must be accountable for it.

- When one’s digital being fails (e.g., for technical or operational reasons), they should be able to re-establish it.

Mind you, this is not guaranteed yet. Many people still do not have access to the internet, and as a result, they do not or cannot have a Digital Being. So, this aspect needs to be addressed, but I am not doing it today. Instead, I am focusing on the desirable properties of a Digital Being. It is still a work in progress, but I have summarized it in the following seven principles:

- Accountable Digital Being

- Expressive Digital Being

- Fair Data Handling

- Right NOT to be forgotten

- Human Friendly

- Adoption-Friendly

- Everyone benefits

Let me go over them one by one.

1. Accountable Digital Being

I don’t think you go out into the city centre and suddenly start shouting random things or slandering people. This is because you will be made to explain why you did what you did, and you will be held responsible for the consequences of your actions.

In other words, we are “accountable” in the real world, and we don’t do stupid things in public. Of course, some people do, but they are so rare that they are featured on news programmes.

In contrast, what about the current state of cyberspace? Do people not think that they are hiding in a safe place where they will not be identified, and so slander others and shout conspiracy theories aloud? Of course, you probably do not. But it is a fact that many people do. We have seen many cases, and one of the symbolic ones is the death of a 23-year-old reality TV actress who was mobbed on social media over her conduct in that TV programme. She was psychologically cornered and chose to end her life.

What is needed is a mechanism by which a person who has committed an unlawful act that is injurious to others can have their anonymity lifted, so that they can

(1) give an account of what they have done

(2) provide evidence that the act was justified

(3) be punished if it is shown from (2) that the act was not justified.

In other words, accountability is required. The more people are made aware of this, the more they will consider whether the actions they are about to take part in, such as flaming, are really socially acceptable. People who are involved in flaming incidents are often acting out of “their own justice”. In other words, they are carrying out a “private justice” and deriving pleasure from it. Such behaviour can be checked, however: when users tried to send an offensive message and the ReThink app asked them if they were sure they wanted to, 93% of the teenagers involved were discouraged from doing so.

On the other hand, in a context where this kind of responsible digital existence is becoming more and more common, not having it can lead to social exclusion. Therefore, we need to be able to establish it whenever we want and to re-establish it if we leave it for any reason.

Some aspects of this requirement are often discussed in the context of DID. However, you can see that it is not a purely technical construct. It will likely involve trusted parties identity-proofing and registering the person in some form or manner. At the same time, there need to be technical measures that support it. I look forward to cryptographers being able to assist in this respect.

2. Expressive Digital Being

This principle says that a Digital Being should make it possible to communicate what kind of person you are to others by using attested claims about your characteristics.

The Harvard Study of Adult Development is known as the longest-running study exploring the sources of happiness. It has been following its panel since 1938. The first cohort in the study was 268 second-year students at Harvard, of whom 19 were still alive in 2017. The second group was chosen from youngsters living in extreme poverty. Most of them lived in apartments without running water.

At the outset of the study, these teenagers were interviewed and went through a health check, and their parents were also interviewed. They became adults and pursued various lives. There were factory workers, lawyers, doctors and so on. One of them became the 35th president of the United States: John F. Kennedy.

Eventually, 1300 additional children were included in the study. In the 1970s, 456 inhabitants of the central Boston area were added, too.

They continued to fill in a questionnaire every two years and to be interviewed. What became clear from the tens of thousands of pages of information was this:

Good relationships keep us happier and healthier.

It was not fame or wealth. It was the relationships, especially with those you are close with.

Then the question becomes: How do we build relationships?

It is by expressing ourselves to the people we want to have good relationships with.

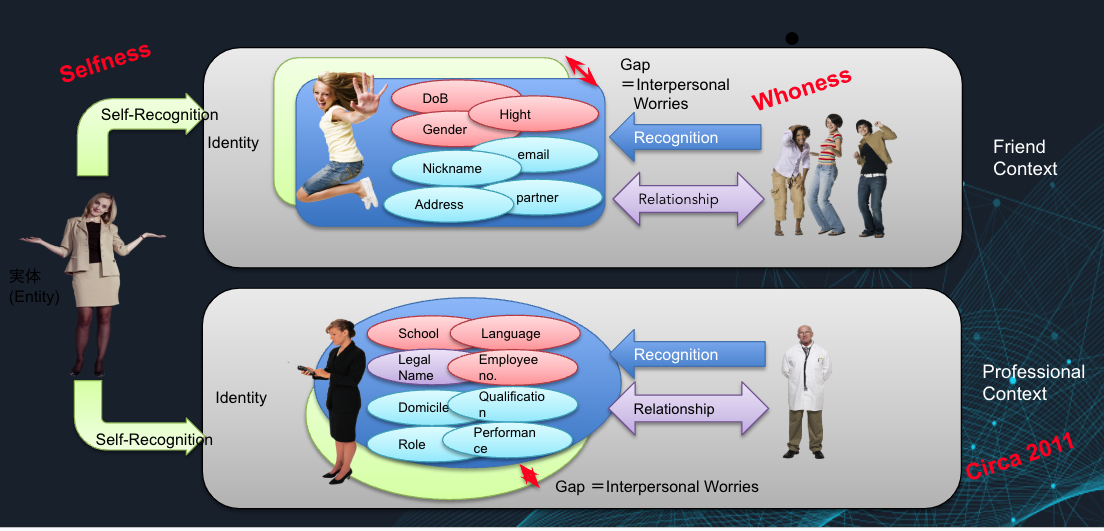

This figure shows you on the left as the entity trying to build good relationships with various people. The upper side is with her friends, and the bottom side is with her boss. There are many more contexts like these, but I did not feel it particularly useful to draw them, as you can extend the argument to any number of contexts, so here are just two.

You have your self-image that you want others to perceive you as. Sometimes, it is called “identity”.

Now this self-image is an abstract object that your friends or boss cannot perceive directly. In real life, you project it onto them by providing the information on how you dress, what scent you wear, how you speak, where you live, your partner choice, and so on – that is, you expose various attributes about yourself and the recipient will indirectly obtain your self-image.

Now, this projection is not always successful. You cannot control how these attributes, or your identity, are perceived at the receiving end. So, there is always a difference between the self-image, or “identity”, that you want them to perceive and what they actually perceive. You try to minimise the gap by continuously providing the receiver with other attributes. When this does not go well, and the gap remains large, it results in interpersonal problems and causes unhappiness.

Now, let’s take a moment to look at the data leakage case. For example, suppose that an attribute that was only provided in the professional context leaked and was made available to the friendship context above. Since the gap was minimized to start with, this will likely enlarge the gap. This is what we know of as “Privacy Invasion”. It makes this person less happy.

It should be clear by now how important it is to be able to project attributes selectively to receivers depending on the relationship context. I am calling this ability “Expressiveness”, and the second principle of “Expressive Digital Being” is about this property. Without it, we will not be able to optimize the relationship.

It has several implications.

- One needs to be linked to and control their Digital Being.

- One needs to be able to authenticate the party they are communicating with, and vice versa.

- One needs to be able to provide fine-grained selective disclosure of the attributes.

OpenID Connect, which I helped create, is a technology that enables something like this. It is a selective disclosure mechanism, albeit one that is almost entirely dynamic. There are two kinds of models:

- Aggregated Claims Model

- Distributed Claims Model

In both models, there are multiple Attribute Providers, which in OpenID Connect are called Claims Providers. This is because the authoritative sources of these attributes/claims are decentralized. For example, the City Office is authoritative on your registered address, your employer is authoritative on your employment record, and your university is authoritative on the degree that you have obtained. They will never be merged into one. There are always going to be many of them. Thus, there are many attribute providers.

The Identity Provider is the party that controls the overall flow. In some jurisdictions, it is called a “Consent Manager” as it obtains consent from the person/user to provide the attributes to the client or receiver. I do not particularly like the name, by the way, as it makes the role of the individual passive. I would like the user to play a more active role, in which the user orders or instructs the Identity Provider to project the attributes for them. Thus, I like the term Identity Provider better than Consent Manager.

Anyways, … getting back to track …

In the Aggregated Claims Model, the identity provider, C, dynamically gathers the claims that the user wants to provide to the client, D, as signed claims. It then assembles them with the claims for which it is itself authoritative, for example, the user authentication result, signs them, and provides the result to D. To make this possible, the user, U, has to go through a setup phase in which they grant A and B permission to provide claims about them to C. A and B have to authenticate U at that point. As a result of the grant, C obtains access tokens Ta and Tb for A and B respectively. When D asks for claims a and b, C first asks U whether it may provide them and, after obtaining permission, goes to A with Ta to obtain the signed claim set a, then repeats the same thing with B using Tb. Note that the user identifiers of U at A and B are likely to be different. To prove that a and b are about the same subject, U, each has to contain either a common user identifier or a common nonce. Both A and B may be able to attest many claims, but for collection minimization, each should attest only the minimal claim set. In OpenID Connect, since everything is dynamic, that is, a and b are minted at the time of the request by D, this is easy. The gathered signed claims with the same nonce are aggregated into the response and provided to D so that D can verify the signatures on a, b, and c.
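To make the flow above more concrete, here is a minimal sketch of it in Python. It is not OpenID Connect itself: the class and function names are made up for illustration, the claims are plain dictionaries rather than JWTs, and an HMAC from the standard library stands in for a real digital signature. In a real deployment D would verify with the public keys of A, B and C and would never hold their secrets.

```python
import hashlib
import hmac
import json
import secrets


def sign(key, payload):
    """Attach an HMAC tag to a payload. A stand-in for a real digital signature."""
    body = json.dumps(payload, sort_keys=True).encode()
    return {"payload": payload, "sig": hmac.new(key, body, hashlib.sha256).hexdigest()}


def verify(key, signed):
    """Recompute the tag and compare it in constant time."""
    body = json.dumps(signed["payload"], sort_keys=True).encode()
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signed["sig"])


class ClaimsProvider:
    """A or B: authoritative for some claims, with its own local identifier for U."""

    def __init__(self, name, records):
        self.name = name
        self.key = secrets.token_bytes(32)
        self.records = records  # local user id -> {claim name: value}
        self.tokens = {}        # access token -> local user id

    def grant(self, local_user_id):
        """Setup phase: U authenticates here and allows C to fetch claims later."""
        token = secrets.token_urlsafe(16)
        self.tokens[token] = local_user_id
        return token

    def signed_claims(self, token, wanted, nonce):
        """Mint only the requested claims at request time, bound to the nonce."""
        record = self.records[self.tokens[token]]
        claims = {name: record[name] for name in wanted if name in record}
        return sign(self.key, {"iss": self.name, "nonce": nonce, "claims": claims})


class IdentityProvider:
    """C: holds the access tokens Ta and Tb and assembles the response for D."""

    def __init__(self):
        self.key = secrets.token_bytes(32)
        self.grants = []  # list of (claims provider, access token)

    def respond(self, wanted, nonce, user_permits):
        if not user_permits:
            raise PermissionError("U did not permit the release")
        gathered = [p.signed_claims(t, wanted, nonce) for p, t in self.grants]
        own = sign(self.key, {"iss": "C", "nonce": nonce, "claims": {"authenticated": True}})
        return [own] + gathered


def accept(response, keys, nonce):
    """D: every part must verify and carry the nonce chosen for this request."""
    return all(
        verify(keys[part["payload"]["iss"]], part) and part["payload"]["nonce"] == nonce
        for part in response
    )


# Hypothetical data: U is "u-123" at A and "emp-9" at B.
a = ClaimsProvider("A", {"u-123": {"address": "1 Example Street", "birthdate": "1990-01-01"}})
b = ClaimsProvider("B", {"emp-9": {"employer": "Example Corp", "grade": "senior"}})
c = IdentityProvider()
c.grants = [(a, a.grant("u-123")), (b, b.grant("emp-9"))]

nonce = secrets.token_urlsafe(16)
response = c.respond(["address", "employer"], nonce, user_permits=True)
keys = {"A": a.key, "B": b.key, "C": c.key}
```

Calling `accept(response, keys, nonce)` succeeds only if all three parts verify and share the nonce. Note that the two local identifiers never appear in the response; the shared nonce is what ties a and b to the same request.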

Note that I said everything is dynamic in OpenID Connect. We opted to take care of 80% of the use cases that way. There are use cases that are not fulfilled by this approach, namely when A and B may be offline and the claims cannot be obtained dynamically. For example,

- when you want to provide attested claims from a party that no longer exists

- when A is only willing to provide a static document encoding the attributes

Under these circumstances, U needs to store a signed claim set that exceeds what D may want. Now, the question becomes more complex than before. You need to be able to extract only the claim D wants and still be able to prove that the claim was not tampered with. If I understand correctly, the mobile driving licence per ISO/IEC 18013-5 tries to deal with this problem. It takes the salted hash of each attribute value, essentially concatenates the hashes, and signs over them. When providing only one claim, C can provide the value of that claim and its salt, together with the hashes of the other claims and the signature over them, so that D can verify that the attribute value matches the corresponding hash and that the entire document has not been tampered with. This is neat, but it loses one privacy characteristic, if I am not mistaken. These hash values act as omni-directional identifiers: receivers can correlate the arrivals of the user on different occasions, or at different receivers if they collude. If you could come up with some cryptographic magic to mitigate this correlation problem, or another solution for selective disclosure from a static document stored at C, the entire identity community would rejoice, in my humble opinion.

The Distributed Claims Model works similarly, but now the claims are not provided through C; instead, D obtains them directly from A and B. This works better in the case where D needs to be continually updated on claims a and b, and where C may be largely offline. Unlinkability of D from A and B is lost in this case.
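The salted-hash idea, and the correlation problem that comes with it, can be sketched in a few lines of Python. This is only an illustration of the general technique, not the ISO/IEC 18013-5 encoding: the function names are hypothetical, the data is JSON rather than the standard's own format, and an HMAC with a made-up `ISSUER_KEY` stands in for the issuer's real signature.

```python
import hashlib
import hmac
import json
import secrets

ISSUER_KEY = secrets.token_bytes(32)  # hypothetical; stands in for the issuer's signing key


def digest(name, value, salt):
    """Salted hash of a single attribute."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def seal(digests):
    """Stand-in signature over the list of digests."""
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue(attributes):
    """Issuer: salt and hash every attribute, then sign over the digests."""
    salts = {name: secrets.token_hex(16) for name in attributes}
    digests = sorted(digest(n, v, salts[n]) for n, v in attributes.items())
    return {"attributes": attributes, "salts": salts, "digests": digests, "sig": seal(digests)}


def present(document, name):
    """Holder: reveal one attribute and its salt, plus all digests and the signature."""
    return {
        "name": name,
        "value": document["attributes"][name],
        "salt": document["salts"][name],
        "digests": document["digests"],
        "sig": document["sig"],
    }


def check(presentation):
    """Receiver: the signature must cover the digests, and the revealed value must match one."""
    if not hmac.compare_digest(seal(presentation["digests"]), presentation["sig"]):
        return False
    revealed = digest(presentation["name"], presentation["value"], presentation["salt"])
    return revealed in presentation["digests"]


# Hypothetical static document, presented to two different receivers.
doc = issue({"family_name": "Doe", "birth_date": "1990-01-01", "age_over_18": True})
to_bar = present(doc, "age_over_18")
to_bank = present(doc, "family_name")
```

Both presentations verify, and the bar never sees the family name. However, `to_bar["digests"]` and `to_bank["digests"]` are identical, as are the signatures, so two colluding receivers can tell that the same document was shown to both. That is the omni-directional identifier problem described above.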
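The difference from the aggregated flow can be shown with a small Python sketch. All names here are hypothetical and signatures are left out for brevity: C hands D only a pointer to each source and an access token, and D then calls A and B itself.

```python
import secrets


class ClaimsProvider:
    """A or B: serves claims directly to whoever presents a valid access token."""

    def __init__(self, name, claims):
        self.name = name
        self.claims = claims
        self.tokens = {}  # access token -> set of claim names it may release
        self.seen = []    # callers observed by this provider

    def issue_token(self, allowed):
        token = secrets.token_urlsafe(16)
        self.tokens[token] = set(allowed)
        return token

    def fetch(self, token, caller):
        """Release only the claims the token allows, and note who asked."""
        self.seen.append(caller)
        return {k: v for k, v in self.claims.items() if k in self.tokens[token]}


def reference(provider, allowed):
    """C: hand D a pointer and a token instead of the claim values."""
    return {"source": provider, "access_token": provider.issue_token(allowed)}


def resolve(references, caller):
    """D: follow each reference and collect the claims directly from the sources."""
    claims = {}
    for ref in references:
        claims.update(ref["source"].fetch(ref["access_token"], caller))
    return claims


# Hypothetical data for illustration.
a = ClaimsProvider("A", {"address": "1 Example Street", "birthdate": "1990-01-01"})
b = ClaimsProvider("B", {"employer": "Example Corp"})
refs = [reference(a, ["address"]), reference(b, ["employer"])]

first = resolve(refs, caller="D")
a.claims["address"] = "2 New Road"  # U moves house; C is not involved
second = resolve(refs, caller="D")
```

D picks up the new address on its second call without C being online, which is the benefit. The cost is visible in `a.seen` and `b.seen`: both providers now know that D is the party asking about U.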

3. Fair Data Handling

In this way, individuals seeking their own well-being will actively and selectively give out their data. However, in order to do this with confidence, they need to be able to trust that the data they give out will be treated fairly. You don’t want the information that you gave to a friend saying it is only between us to be shared with all the other friends. Similarly, if you give out information only to one company and it gets leaked to the public, that’s not good. The discrepancy between your self-image and that of other persons, which has been minimised so far, will widen.

Unauthorised publication or disclosure is just one example of improper handling. There are many more examples of inappropriate processing. Any handling that is outside the scope of what the individual concerned expects, or that could cause harm to the individual, is inappropriate handling.

In recent years, this aspect has often been discussed in the context of Centralized vs. Decentralised Identity, but from my point of view, that misses the point. What is important is whether the data handler is a data controller or a data processor. When folks talk about Centralized, they tend to imagine Big Tech. These companies are data controllers. Thus, they use the data as they see fit. They are contrasted with smartphone-wallet-based implementations, which are called decentralized. In that case, the wallet is a data processor, so it processes the data only according to your instructions.

However, when you think twice, the topology actually does not matter. If the wallet provider is a data controller, your control is actually gone, while if the Centralized IdP is acting as a data processor under your control, a large part of the problem is actually gone.

From this, we can see that the issue is about who retains the control, how transparent the processing is, and whether an appropriate practice is followed by the processor.

In order to be considered appropriate, the privacy principles must be adhered to, for example, in accordance with a privacy framework such as ISO/IEC 29100.

To ensure that the processing does not go beyond the expectations of the individual concerned, data handlers need to communicate to individuals exactly what they intend to do. This is where the Privacy Notice comes in. The standard for this is ISO/IEC 29184, of which I was a project leader.

For individual systems, it is also necessary to develop a system for risk assessment and stakeholder approval using the Privacy Impact Assessment (PIA) framework. The PIA will involve external stakeholders and the publication of a PIA report, which will provide a degree of transparency and monitoring of the operation to third parties.

In order for this kind of legitimate handling of data to become more widespread, there will need to be not only legislative pressure but also social pressure. In this sense, the onus is on each and every one of us as members of society.

4. Right NOT to be forgotten

This may sound strange as “Right to be forgotten” has been heard so many times, but this is not a mistake. This in fact is a flip side of the Right to be forgotten, and I believe that it forms a basic Digital Being right in cyberspace.

Let me pose this question.

When does a person really die?

Of course, when the heart stops beating, that is one death, but this question is not talking about that. The classic answer is

When the person is forgotten by everybody.

The corollary of this for the people who migrated to the cyber continent is “When all of their data is deleted.”

Naturally, one should be allowed to disappear if one so desires, but otherwise, it should be possible for the record of an Accountable Digital Being to remain.

In addition to physical extermination, social extermination also works as a potent way to exterminate a person in the cyber continent. This is done by rewriting a person’s attributes to make them socially undesirable in the eyes of the mainstream of the day. In modern Japan, this can be done by labelling a person as a paedophile or a misogynist and then spreading the word as such. Even if you don’t go that far, simply deleting your university graduation record and declaring you a fraud would be damaging enough.

A digital being needs to be able to counter these attacks. It needs to be able to store attributes about itself that are signed by the issuer of those attributes. The issuer may later claim that the key used for the signature was not there, so this must also be recorded in an objectively unfalsifiable form.

In view of this, it is important to store the signer’s key in a time-stamped and widely replicated repository, such as the blockchains used in various economic activities, so that anyone can later verify that the signature key was valid at the time. If the need arises, it should also be an option to write your attributes in such a place so that an attacker cannot delete or change them. This way, even if the physical entity in the real world is deleted, it will remain as a memory in the cyber continent.

Now, how do we achieve it?

It is often claimed that Decentralised Identifiers (DIDs) and Verified Claims, with Wallets on smartphones acting as the IdP, would solve this problem. The recently announced European Digital Identity Regulation proposal also talks about “Wallets”, so it may be thinking similarly. However, I am actually dubious of such claims.

True, if the IdP is run by yourself, it will not ban you. But if the IdP is implemented as an App, then the platform provider can ban that app. That will remove your access to the identity holder and that’s almost the same as account banning. We had a prominent example in the game sphere a few years ago.

(source) Fortnite: Nineteen Eighty-Fortnite https://www.youtube.com/watch?v=euiSHuaw6Q4

If that happened in the identity wallet sphere, just imagine it. The platform can simply ban the app, saying that it does not support the platform identifier and therefore violates the developer agreement. It’s exactly the same situation.

Also, unless you code your App yourself, you would not know whether that App is trustworthy. It may be collecting your data in disguise. Such examples abound.

Even if the App provider is not malicious, it may still be dangerous. Suppose that the app provider was using a hacked compiler that includes malicious code in the resulting binary. The malicious code may extract the claims you store in the App and start selling them. It will not be detected by Apple’s review as there is nothing in the source code that exhibits malicious behaviour. Recently, many apps were removed from the App Store for exactly this reason.

From the point of view of the data receiver, you do not know whether the App you are interacting with is legitimate. As far as I understand, there is currently no standardized way of remotely recognizing the binary identity of an App. As there are some proprietary libraries that try to achieve it, what is likely is that each data receiver will start providing its own Wallet. If you look at the QR code payment area, this seems to be the case.

This indicates that requirement 5 likely cannot be solved by a purely technical construct. We probably need legal and business constructs as well. The new European Digital Identity regulation proposal seems to be aware of this and requires Wallets to be certified.

Also, we could leverage competition laws and telecommunications laws to prevent the ban by the platforms.

But this tactic works only in the case where we have a democratic government. It does not work under an oppressive regime. Let’s consider the case where you became an enemy of the State by becoming a freedom fighter.

What the state is likely to do in the case is ban the identity, wipe the identity, or rewrite the characteristics to smear you.

Actually, even a democratic government may sometimes try to do it. In a democracy, a higher governance scheme such as constitutional rights may help alleviate such risk in the end. However, under an oppressive regime, it will not.

Here, technological measures can help. For example, if the keys are time-stamped and if you write those signed claims or credentials into immutable ledgers that are also used by other economic activities, it will become extremely difficult for the government to wipe or rewrite them.
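As a rough illustration of why such a record is hard to rewrite, here is a toy hash-chained, time-stamped log in Python. It is an assumption-laden sketch, not a blockchain: the record layout and function names are hypothetical, timestamps are plain integers, and the real protection comes from the ledger being widely replicated, which a single in-memory list cannot show.

```python
import hashlib
import json

GENESIS = "0" * 64


def entry_hash(prev_hash, timestamp, record):
    """Each entry commits to its predecessor, its timestamp and its content."""
    body = json.dumps([prev_hash, timestamp, record], sort_keys=True).encode()
    return hashlib.sha256(body).hexdigest()


def append(ledger, timestamp, record):
    prev = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"prev": prev, "time": timestamp, "record": record,
                   "hash": entry_hash(prev, timestamp, record)})


def intact(ledger):
    """Recompute the chain; any rewritten or removed entry breaks it."""
    prev = GENESIS
    for entry in ledger:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["time"], entry["record"]):
            return False
        prev = entry["hash"]
    return True


def key_valid_at(ledger, key_id, when):
    """True if the key was registered at or before `when` and not revoked by then.
    Assumes entries were appended in time order."""
    valid = False
    for entry in ledger:
        record = entry["record"]
        if (entry["time"] <= when and record.get("key_id") == key_id
                and record["event"] in ("registered", "revoked")):
            valid = record["event"] == "registered"
    return valid


# Hypothetical history: a university registers a key, signs a degree claim, later revokes the key.
ledger = []
append(ledger, 100, {"event": "registered", "key_id": "univ-key-1"})
append(ledger, 200, {"event": "claim", "signed_by": "univ-key-1",
                     "claim_digest": hashlib.sha256(b"degree: BSc").hexdigest()})
append(ledger, 300, {"event": "revoked", "key_id": "univ-key-1"})
```

With this history, `key_valid_at(ledger, "univ-key-1", 200)` shows that the key was valid when the degree claim was signed, even though it was revoked later, and `intact(ledger)` fails as soon as anyone edits or drops an earlier entry.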

I hear the cry saying, “Thou shall never write personal data onto blockchain because of privacy! You will not be able to delete the data. Right to be forgotten is violated”.

Well, is it so?

Surely, in normal cases, and under most circumstances, it shall not be.

However, this is an exceptional case. It is an existential case. You are about to be deleted from this world. If writing data about you into the ledger so that it cannot be deleted will protect you from being deleted from the physical world and from people’s memory, what would you do?

Remember my earlier question: When does a person die? When the heart stops beating? That certainly is a physical death, but we do not live only in a physical form. We also live as a memory. Your real death comes when you are deleted from people’s memory or re-painted in the memory of your close friend as a dirty, filthy liar.

You need to write your personal data into the immutable ledger to achieve your right not to be forgotten.

Right NOT to be forgotten.

Then, while your physical being may be deleted, your digital being remains. It will put societal pressure onto those powers then.

5. Human Friendly

When we think about information systems, the people who use them should be the first thing we consider. In practice, however, the convenience of the people and machines who manage the system often takes precedence. The result is a system that is “difficult to use” or that “tricks” people.

In thinking about being human-friendly, there are a number of considerations that need to be made about the individual. The main aspects that I usually put forward are:

(1) The asymmetry of information between individuals and legal entities;

(2) The bounded rationality of individuals; and

(3) The existence of socially vulnerable people who are not part of the majority.

The asymmetry of information between individuals and companies means that companies generally have more resources and information available to them than individuals. In an information economy, the one with more information has a trading advantage. As a result, in a purely market economy, the optimal equilibrium is not reached. Therefore, measures are needed to support the individual and reduce the information asymmetry. Third-party evaluations and their publication or a counsellor working for individuals are one such initiative.

The second point, the bounded rationality of the individual, refers to the fact that, due to the limits of our cognitive abilities, we are limited in our rationality, no matter how rationally we try to act. For example, if a rational individual wanted to enter into a contractual relationship with an entity, he or she would have to read and understand the contract, understand the laws and circumstances surrounding it, and then act accordingly. But most people don’t. In fact, it’s impossible. Most people would not be able to read and understand the wording of a contract, and even if they had the ability, it would take them a month to read all the privacy policies presented in a year. The operation of society on the cyber continent should be based on an acknowledgement of this.

For example, if you are providing a service to an individual, your terms of use and privacy notice should be as similar as possible to what one would expect from common sense, and only the differences and important points should be extracted and presented to the individual, with the full text available for later reference. This is also in line with the GDPR as far as I understand.

Some people claim that if the IdP were operated by the individual, it would not misuse the data. But by now, you are aware that this is not the case. We do not know whether the App is well-behaved. It could be even easier if we put forward a legal framework to control the “centralized IdP”. What is important here is that the individual becomes the data controller in principle, and the providers of the IdP service become data processors. The topology of the deployment really is not the decisive factor.

It should also be noted that for many people, actively managing one’s IdP is just too much or too dangerous.

The third point, the existence of marginalised groups, means that such non-mainstream people should not be left behind, and that the system should be designed so that they are not.

Those who stand on the side of the majority tend to mistakenly believe that their common sense is just, or that what they are comfortable with is sufficient. In many cases, however, this leads to brutality against minorities.

Some minorities are minorities in terms of physical characteristics and abilities, others in terms of ideological beliefs and cultural backgrounds. The needs of these people are easily ignored by the majority, out of indifference and sometimes out of a sense of ‘justice’. The new rules of the cyber continent must not allow this to happen. And if we are kind to the minorities, we will surely be kind to the majority, both in terms of perception and user experience.

6. Adoption-Friendly

When a new technology comes along, it is rarely zero-based and completely new, and it rarely replaces the existing one in one single swoop. Usually, they are built on top of the existing infrastructure. In this context, it is important for new technologies to consider their compatibility and connectivity with existing technologies.

Many engineers like a clean slate. They want to entirely replace the systems. That’s a hard sell. Most technological advancement happens incrementally.

Let’s think about the railroads. When electric trains were introduced, they replaced diesel engines with electric motors, but they did not replace the rails or stations. They continued to use the existing rail systems.

When automobiles were introduced, they replaced horses with petrol engines, but they did not need a totally new set of roads. The roads were improved gradually. If new roads had needed to be built, market penetration would have been much slower.

Also, open technologies are far more likely to spread than closed technologies. This is because an open development process is more likely to attract requests from a wider range of stakeholders, resulting in a wider range of applications, and is more easily disseminated to engineers.

In addition, to ensure interoperability, a common testing platform is needed to ensure compliance with specifications. This can be seen in the case of Open Banking in the UK. Standards are written in everyday languages such as English. However, everyday languages, unlike programming languages, are open to a certain degree of interpretation. As a result, it is not uncommon for a single standard to produce different implementations that do not connect with each other. In the case of Open Banking in the UK, it often took weeks for a bank to connect with a Fintech in the beginning. The solution was a suite of compliance tests, through which the time taken to connect was reportedly reduced to around 15 minutes.

It’s not just about initial connectivity. Systems are subject to change for a variety of reasons. At these times it is also necessary to check that compatibility is not compromised. If a conformance test suite is in place and used for ongoing development, the risk of incompatibility can be significantly reduced.

7. Everyone benefits

The last principle, “everyone benefits”, is not often mentioned, but it is a very important one. Environmental change that comes at the expense of a few is difficult to implement, and even when it is implemented, it does not last.

When privacy is a priority, it can be easy to focus on strengthening individual rights, and when efficiency is a priority from a business perspective, individual rights can be seen as a distraction. However, any attempt to strike an imbalance is likely to be met with fierce opposition, resulting in a situation where the status quo is maintained or even worsened. It is also unethical to allow the tyranny of the majority to overwhelm the minority and allow the majority to profit.

There is a concept in economics called Pareto improvement. It’s a change where no one is worse off and someone is better off. Fortunately, the use of data is not a zero-sum game. Data is not consumed or lost when it is used, and if used with care, both the companies that use it and the individuals who allow it to be used can benefit. In many cases, Pareto improvements are possible.
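The definition is simple enough to state as a check in Python. The parties and the benefit scores below are entirely hypothetical and only serve to illustrate the comparison.

```python
def is_pareto_improvement(before, after):
    """True if no party is worse off and at least one party is better off."""
    parties = before.keys()
    no_one_worse = all(after[p] >= before[p] for p in parties)
    someone_better = any(after[p] > before[p] for p in parties)
    return no_one_worse and someone_better


# Hypothetical benefit scores, for illustration only.
status_quo = {"individual": 5, "company": 5}
careful_data_use = {"individual": 6, "company": 8}
exploitative_use = {"individual": 3, "company": 9}
```

Moving from `status_quo` to `careful_data_use` passes the check, while moving to `exploitative_use` does not, because the individual ends up worse off even though the total is higher.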

Although the figures are a little out of date, a 2016 European Commission report suggests that the increased use of data will account for 5.4% of GDP in the EU27 by 2025.

In order to receive the fruits of this system, individuals, companies and governments must shape it in such a way that they can benefit from it. Otherwise, the system will not be implemented and will not be able to stand as a system.

So, these are the seven principles of Digital Beings.

They are not purely technical, but do involve social, legal and business constructs.

However, there probably is a lot that cryptography can provide.

I am hoping to collaborate with you in the coming days.